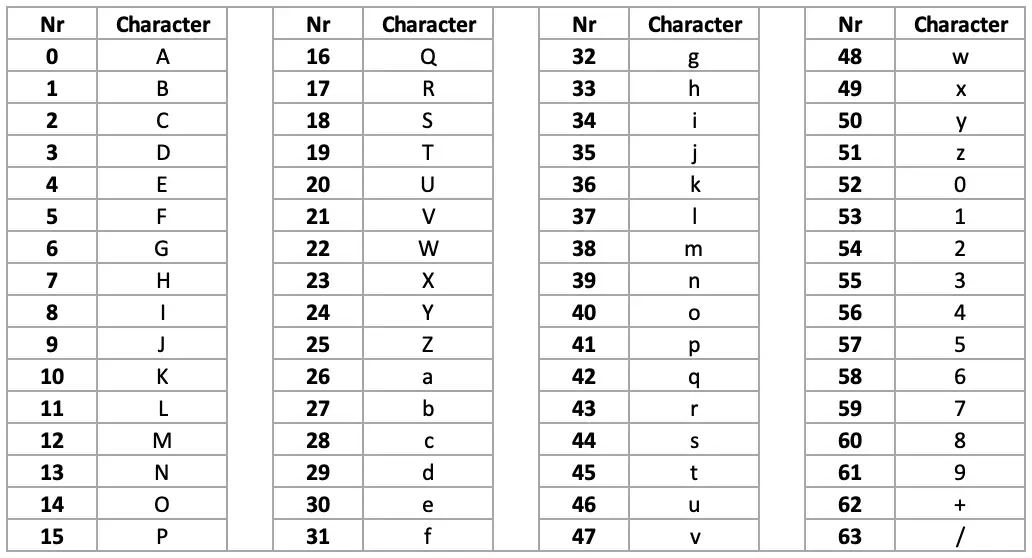

I'll try to explain the data another way. What the numbers tell me is this: if I have random (or hard-to-compress) data to store in the code section of my cart (for instance, a compressed image), and my only concern is compressed code size, should I store it as base64, hex, binary, or something else? What I measure is how pathological the compression becomes depending on the alphabet size.

Also, this is about the new compression algorithm (though the very first graph also shows how the old one behaved).

We are in a case where compression utterly fails: the whole thread is about storing random data, which by definition cannot be compressed, so the ratio is always below 1.

I think it'd be more useful to actually generate carts with random data in them and average a set of compressed export sizes per alphabet.

Sorry, a few things were not clear, it seems:

I'm not entirely sure your Python script is really simulating the two compression types properly. Is this correct?

Also, didn't zep completely change the compression method? It used to be a base64-optimized LZSS-style encoder, but I understood the new one to be something that throws away past-data lookup in favor of a dynamic heuristic that constantly shuffles its concept of ideal octets so that it can refer to the most likely ones with very few bits.

Like, if you started with 100 bytes and ended up with 75 after compression, the ratio would be (100-75)/100 = 0.25. I have to guess you meant to say it's more like "amount of bytes saved divided by amount of incoming bytes".
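To make the two readings of "ratio" concrete, here is a small sketch using the 100-byte to 75-byte example above. The function names are hypothetical, chosen only for illustration:

```python
def saved_fraction(original: int, compressed: int) -> float:
    """Bytes saved divided by incoming bytes: (original - compressed) / original."""
    return (original - compressed) / original


def size_ratio(original: int, compressed: int) -> float:
    """Compressed size divided by original size."""
    return compressed / original


# The 100-byte -> 75-byte example from the comment.
print(saved_fraction(100, 75))  # 0.25
print(size_ratio(100, 75))      # 0.75
```

Under the first definition, a value close to 1 means excellent compression and 0 means none; under the second, a value close to 1 means compression failed. Which one is meant changes whether "close to 1" is good or bad news.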

You say it's "compressed size divided by information size", but also say it's better when it's close to 1, which would only happen if compression utterly failed and the compressed data was the same size as the information size.
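One way to try the experiment suggested above without building carts is sketched below: it encodes the same random payload as raw bytes, base64, and hex, compresses each, and averages compressed size divided by information size (the raw payload length). This rests on stated assumptions. Python's `zlib` (DEFLATE) stands in for the compressor and is neither the old nor the new cart compressor discussed here. The "binary" variant assumes raw bytes could be stored as-is. The function names and parameters (`payload_size`, `trials`) are hypothetical. Real numbers would still have to come from exported carts.

```python
import base64
import os
import zlib


def encodings(data: bytes) -> dict[str, bytes]:
    """The same payload written with alphabets of different sizes."""
    return {
        "binary (256 symbols)": data,
        "base64 (64 symbols)": base64.b64encode(data),
        "hex (16 symbols)": data.hex().encode("ascii"),
    }


def average_ratios(payload_size: int = 4096, trials: int = 20) -> dict[str, float]:
    """Average compressed size / information size over several random payloads.

    zlib is only a stand-in compressor here, not the cart's real one.
    """
    totals: dict[str, float] = {}
    for _ in range(trials):
        data = os.urandom(payload_size)  # random, so effectively incompressible
        for name, encoded in encodings(data).items():
            compressed = zlib.compress(encoded, 9)
            totals[name] = totals.get(name, 0.0) + len(compressed) / len(data)
    return {name: total / trials for name, total in totals.items()}


if __name__ == "__main__":
    for name, ratio in average_ratios().items():
        print(f"{name:22} {ratio:.3f}")
```

For random data, no encoding should land much below 1 on this measure; what differs between alphabets is how much of the encoding's overhead the compressor fails to remove.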
